
11/19/2023

Google Translate speech-to-text API

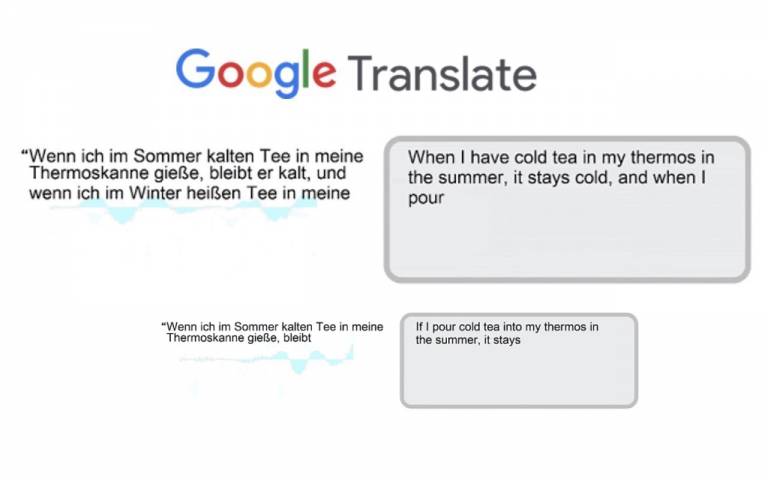

Search giant Google has introduced a major update to its Cloud Speech API, which was launched in 2016 for developers to transcribe speech to text. The update adds 30 new languages and locales to the existing voice typing feature, which currently supports 89 languages in Gboard on Android, Voice Search, Google Translate and other Google apps.

Google says the speech recognition will also support ancient languages such as Georgian (with written records dating to about 430 AD), along with Swahili and Amharic, two of Africa’s largest languages. It is also adding several Indian languages, including Gujarati, Tamil and Bengali, in a bid to make the internet more inclusive. For these language integrations, Google says it went a step further by working with native speakers to collect speech samples, asking them to read common phrases. “This process trained our machine learning models to understand the sounds and words of the new languages and to improve their accuracy when exposed to more examples over time,” said Daan van Esch, Technical Program Manager, Speech, Google.

Apart from this update, the company has also introduced voice dictation for emojis in US English. People in the US speaking English can now say “smile faced emoji” instead of typing the symbol or selecting the emoji from the repository. The company states that it will bring this to more languages and locations soon.

On the enterprise side, Google Cloud Speech API was launched in beta last year to improve speech recognition for everything from voice-activated commands to call center routing to data analytics.
